Introduction: The Limits of the Cloud

For the last fifteen years, the prevailing wisdom in software engineering has been to push everything to the cloud. The centralized cloud computing model, dominated by hyperscalers like AWS, Google Cloud, and Microsoft Azure, offered near-limitless scalability, substantial cost savings, and simplified management. Instead of maintaining expensive server racks in a back room, businesses could rent compute power in data centers thousands of miles away.

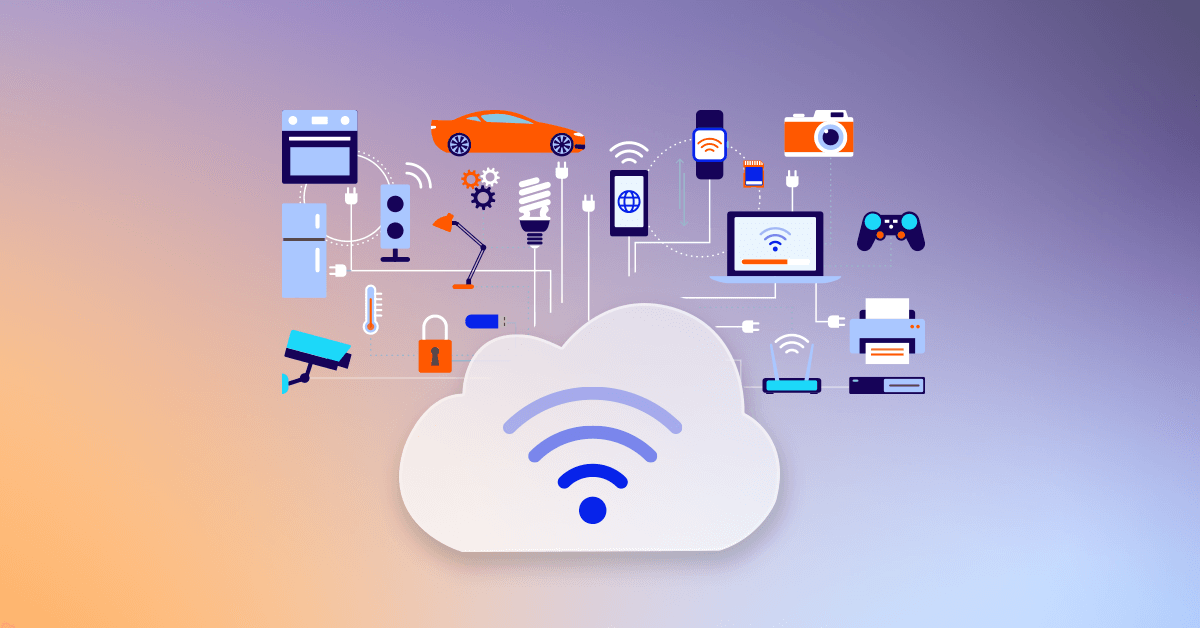

However, as the digital landscape evolves, the centralized cloud is hitting a wall built not by a lack of processing power, but by the immutable laws of physics. The explosion of the Internet of Things (IoT) has fundamentally changed how data is generated and consumed. We are no longer just connecting laptops and smartphones to the internet; we are connecting cars, factory robots, pacemakers, and entire city grids. These billions of devices generate unprecedented volumes of data, much of which must be processed in near real time. Shipping all of that data to a distant, centralized data center and waiting for the answer is increasingly impractical. The solution is Edge Computing: a paradigm shift that moves computation out of the cloud and closer to the data source.

The Physics of Latency

To understand why Edge Computing is necessary, we must look at latency: the time it takes for data to travel from a device to a server and back again.

Data travels through fiber-optic cables at roughly two-thirds the speed of light in a vacuum, or about 200,000 kilometers per second. If an IoT device in New York needs to process data using a cloud server in Oregon, the physical distance is roughly 4,000 kilometers each way. Because a round trip covers that distance twice, the basic relationship $t = \frac{d}{v}$ gives a minimum round-trip time of $t_{\text{RTT}} = \frac{2d}{v} = \frac{2 \times 4{,}000\ \text{km}}{200{,}000\ \text{km/s}} = 0.04\ \text{s}$, or roughly 40 milliseconds, imposed purely by the speed of light in fiber.
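
A few lines of Python reproduce this back-of-the-envelope figure. This is a minimal sketch that assumes a velocity factor of 2/3 and a perfectly straight fiber path between the two cities; real cable routes are longer, so actual propagation delay is higher. The function name `fiber_rtt_ms` is illustrative.

```python
# Speed of light in a vacuum, in km/s.
C_VACUUM_KM_S = 299_792.458


def fiber_rtt_ms(distance_km: float, velocity_factor: float = 2 / 3) -> float:
    """Minimum round-trip propagation delay over fiber, in milliseconds.

    Ignores routing detours, queuing, switching, and server processing,
    so real-world latency will always be higher than this floor.
    """
    v = C_VACUUM_KM_S * velocity_factor  # signal speed inside the fiber (km/s)
    return 2 * distance_km / v * 1000    # t = 2d / v, converted from s to ms


print(f"New York -> Oregon: {fiber_rtt_ms(4_000):.1f} ms")
print(f"Server 50 km away:  {fiber_rtt_ms(50):.2f} ms")
```

For comparison, a server 50 km away comes out to about half a millisecond, which is why moving compute closer matters more than making it faster.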

When you add the time it takes to route the data through multiple internet service providers, routers, and switches, plus the server’s actual processing time, that latency can easily climb to 100 or 200 milliseconds.

For a user loading a webpage, a 200-millisecond delay is barely noticeable. For an autonomous vehicle traveling at 70 miles per hour, 200 milliseconds can be the difference between braking safely and a catastrophic collision. For a robotic arm performing remotely guided microsurgery, that latency is unacceptable. Edge computing addresses this by placing the processing power locally, often within the device itself or on a server just a few feet away, which can reduce latency to under 5 milliseconds.
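
To make the stakes concrete, the sketch below converts a delay into the distance a vehicle travels before a response can even arrive. It assumes constant speed and counts only the network delay, not the vehicle's own reaction or braking time; the helper name `distance_during_delay_m` is hypothetical.

```python
# Metres per second in one mile per hour (1 mile = 1609.344 m exactly).
MPS_PER_MPH = 1609.344 / 3600


def distance_during_delay_m(speed_mph: float, delay_ms: float) -> float:
    """Distance (m) covered at constant speed while waiting on a response."""
    return speed_mph * MPS_PER_MPH * delay_ms / 1000


for delay_ms in (200, 5):
    metres = distance_during_delay_m(70, delay_ms)
    print(f"{delay_ms:>3} ms at 70 mph -> {metres:.2f} m travelled")
```

At 70 mph, a 200 ms round trip corresponds to about 6.26 m of travel, while a 5 ms edge response corresponds to about 0.16 m.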

The IoT Data Tsunami and Bandwidth Bottlenecks

Beyond latency, the sheer volume of data generated by modern IoT devices is staggering. A single modern smart factory equipped with thousands of acoustic sensors, thermal cameras, and vibration monitors can generate petabytes of raw data every single month.

If every factory attempted to stream 100% of its raw, uncompressed 4K video feeds and sensor logs to a centralized cloud for analysis, the combined traffic would quickly overwhelm upstream network links. Bandwidth is expensive, and transmitting “junk” data (routine readings that contain nothing of interest) is a massive waste of resources.
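
To put "petabytes every month" in perspective, a back-of-the-envelope sketch can convert a monthly data volume into the sustained uplink bandwidth a single site would need. It assumes a 30-day month, decimal units, and perfectly smooth traffic with no protocol overhead; the function name `sustained_gbps` is illustrative.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # assumes a 30-day month
PETABYTE = 10**15                   # decimal petabyte, in bytes


def sustained_gbps(bytes_per_month: float) -> float:
    """Average uplink rate (Gbit/s) needed to ship a monthly data volume."""
    bits = bytes_per_month * 8
    return bits / SECONDS_PER_MONTH / 1e9


print(f"1 PB/month ~ {sustained_gbps(PETABYTE):.2f} Gbit/s, around the clock")
```

Roughly 3 Gbit/s of continuous uplink per petabyte per month, for one factory and before retransmissions or bursts, is the kind of load that filtering at the edge is meant to avoid.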

Edge Computing introduces intelligent filtering. An AI model running on an Edge server on the factory floor can analyze the video feeds locally in real time. It sends an alert (a message of a few kilobytes at most) to the central cloud only if it detects an anomaly, such as a machine overheating. The routine, normal data, which makes up the vast majority, is processed and discarded at the edge, saving immense bandwidth costs.
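
The filtering pattern can be sketched in Python. This is a simplified illustration rather than the method described above: a fixed temperature threshold stands in for the AI model, and the `Reading` type, the `edge_filter` function, and the 90 °C limit are all hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Iterable, Iterator

# Hypothetical limit; a real deployment would tune or learn this per machine.
MAX_SAFE_TEMP_C = 90.0


@dataclass
class Reading:
    machine_id: str
    temp_c: float
    timestamp: float


def edge_filter(readings: Iterable[Reading]) -> Iterator[bytes]:
    """Yield a compact JSON alert for each anomalous reading; drop the rest."""
    for r in readings:
        if r.temp_c > MAX_SAFE_TEMP_C:
            alert = {"machine": r.machine_id, "temp_c": r.temp_c, "ts": r.timestamp}
            yield json.dumps(alert).encode("utf-8")
        # Normal readings fall through here and are discarded locally.


# Simulate 10,000 routine readings (71.2 to 72.1 °C) plus one overheating event.
readings = [Reading("press-7", 71.2 + (i % 10) * 0.1, 1_700_000_000 + i) for i in range(10_000)]
readings.append(Reading("press-7", 97.5, 1_700_010_000))

alerts = list(edge_filter(readings))
print(len(alerts), alerts[0])
```

Of the 10,001 simulated readings, only one alert (well under 100 bytes of JSON) would be forwarded to the cloud. Writing the filter as a generator means readings are examined one at a time rather than buffered in bulk, which suits memory-constrained edge hardware.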

The Architecture: Cloud, Fog, and Edge

The shift to the edge does not mean the death of the cloud; rather, it creates a tiered, hierarchical architecture for application deployment.

- The Edge Tier: This is where the physical world meets the digital world. It consists of the end devices themselves (smartphones, smart thermostats, autonomous drones) and local gateway servers. These devices handle real-time, ultra-low latency processing and immediate decision-making.

- The Fog Tier (Fog Computing): The term was coined by Cisco. The “Fog” sits between the Edge and the Cloud and consists of regional data centers or servers at cellular towers (such as those deployed alongside 5G networks). These nodes handle data that requires more processing power than an edge device can provide but still needs lower latency than a centralized cloud can offer, and they aggregate data from thousands of local edge devices.

- The Cloud Tier: The centralized cloud remains the ultimate authority. It is no longer responsible for real-time reactions. Instead, it handles heavy, long-term tasks: storing historical data lakes, training massive machine learning models across aggregated global data, and managing the overarching business logic of the application. Once the cloud trains a new, smarter AI model, it pushes that updated model down to the Edge devices to execute.
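
One way to express this tiered model in code is a placement rule that keeps each workload as centralized as its latency budget and compute needs allow. This is a hypothetical sketch: the `Tier`, `Workload`, and `place` names, and the per-tier latency and capacity figures in `TIER_PROFILE`, are illustrative assumptions rather than measurements or an established scheduling algorithm.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EDGE = "edge"
    FOG = "fog"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    latency_budget_ms: float  # worst acceptable response time
    compute_units: float      # abstract measure of processing required


# Hypothetical per-tier characteristics; real figures depend on the deployment.
TIER_PROFILE = {
    Tier.EDGE: {"typical_rtt_ms": 5, "max_compute": 1},
    Tier.FOG: {"typical_rtt_ms": 20, "max_compute": 100},
    Tier.CLOUD: {"typical_rtt_ms": 100, "max_compute": float("inf")},
}


def place(w: Workload) -> Tier:
    """Return the most centralized tier that meets both latency and compute needs."""
    for tier in (Tier.CLOUD, Tier.FOG, Tier.EDGE):
        profile = TIER_PROFILE[tier]
        fast_enough = profile["typical_rtt_ms"] <= w.latency_budget_ms
        big_enough = w.compute_units <= profile["max_compute"]
        if fast_enough and big_enough:
            return tier
    raise ValueError(f"No tier can satisfy workload {w.name!r}")


for w in (
    Workload("collision-avoidance", latency_budget_ms=10, compute_units=0.5),
    Workload("regional-traffic-aggregation", latency_budget_ms=50, compute_units=40),
    Workload("model-retraining", latency_budget_ms=3_600_000, compute_units=10_000),
):
    print(f"{w.name} -> {place(w).value}")
```

With these illustrative profiles, collision avoidance lands on the edge, regional aggregation on the fog, and model retraining in the cloud. Preferring the most centralized viable tier reflects the idea that the cloud remains the home of heavy, non-urgent work, and that workloads move outward only when latency forces them to.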

Transforming Real-World Industries

The deployment of edge architectures is already revolutionizing several critical sectors:

- Autonomous Vehicles and Smart Grids: Self-driving cars are essentially rolling edge data centers. They process terabytes of LiDAR and camera data locally to make split-second driving decisions without relying on an active internet connection. Similarly, smart cities use edge computing to adjust traffic-light timing to local conditions, and smart power grids use it to reroute electricity dynamically during localized outages.

- Healthcare and Wearables: Medical IoT devices, such as continuous glucose monitors and smart pacemakers, track patient vitals continuously. Edge computing allows these devices to detect a dangerous event, such as an abnormal heart rhythm or a glucose level outside a safe range, and trigger an alarm immediately, rather than waiting to sync with a cloud server.

- Retail and Inventory Management: Modern brick-and-mortar stores use edge-powered camera systems to track shelf inventory in real time, notifying staff when a product needs restocking, and to analyze foot-traffic patterns that inform store layouts, all without streaming sensitive customer video feeds to an external server.

Security at the Edge: A Double-Edged Sword

The decentralization of compute power introduces complex new security dynamics.

On one hand, Edge Computing enhances privacy and data sovereignty. Because sensitive data (like a patient’s heart rate or a live video feed of a factory) is processed and discarded locally, it never traverses the public internet, drastically reducing the risk of it being intercepted by a man-in-the-middle attack or compromised in a massive cloud data breach.

On the other hand, Edge Computing vastly expands an organization’s attack surface. Instead of securing a handful of heavily fortified cloud data centers, security teams must now protect thousands of physically accessible IoT devices scattered across the globe. A hacker cannot easily walk into an AWS data center, but they can easily steal a smart sensor off the side of a building and attempt to extract its cryptographic keys to gain access to the broader network. Zero Trust architectures and hardware-backed security (such as Trusted Execution Environments and secure key storage) are essential when deploying edge applications.
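
One ingredient of a Zero Trust edge deployment is authenticating every message with a key unique to each device, so that a key extracted from one stolen sensor cannot impersonate any other device and can be revoked on its own. The sketch below illustrates that idea with HMAC-SHA256 from Python's standard library. The `Gateway` class and `sign` helper are hypothetical, and the sketch does not model Trusted Execution Environments, replay protection, or transport encryption such as TLS, all of which a real deployment would need.

```python
import hashlib
import hmac
import secrets


def sign(key: bytes, payload: bytes) -> bytes:
    """Device side: compute an HMAC-SHA256 tag over the payload."""
    return hmac.new(key, payload, hashlib.sha256).digest()


class Gateway:
    """Hypothetical edge gateway that authenticates every incoming message."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}  # one secret per device, never shared

    def enroll(self, device_id: str) -> bytes:
        key = secrets.token_bytes(32)
        self._keys[device_id] = key
        return key  # in a real deployment, provisioned into the device's secure storage

    def revoke(self, device_id: str) -> None:
        self._keys.pop(device_id, None)

    def accept(self, device_id: str, payload: bytes, tag: bytes) -> bool:
        key = self._keys.get(device_id)
        if key is None:
            return False  # unknown or revoked device: no implicit trust
        return hmac.compare_digest(sign(key, payload), tag)


gw = Gateway()
k1 = gw.enroll("sensor-001")
gw.enroll("sensor-002")
msg = b'{"temp_c": 21.4}'

print(gw.accept("sensor-001", msg, sign(k1, msg)))  # True
print(gw.accept("sensor-002", msg, sign(k1, msg)))  # False: key belongs to another device
gw.revoke("sensor-001")
print(gw.accept("sensor-001", msg, sign(k1, msg)))  # False: device revoked after theft
```

Using `hmac.compare_digest` rather than `==` avoids leaking timing information about how much of a forged tag matched.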

Conclusion: The Symbiotic Future

The future of software deployment is not a strict choice between the Cloud and the Edge; it is a seamless continuum of compute. As 5G and eventually 6G networks blanket the globe, the line between local processing and remote processing will continue to blur. Developers must now architect applications that are inherently distributed, capable of dynamically shifting workloads between the centralized cloud for heavy lifting and the decentralized edge for split-second execution.